AI gets pulled into climate discussions in some pretty sensational ways. Some people claim it’s a powerful tool that could help solve some of the world’s most intractable climate issues. Others say it’s just another energy-guzzling technology that’s silently doing more harm. The truth is that the situation is a little more complex than either of those headlines makes it sound.

AI certainly has an energy appetite. Data centers are running 24/7, models need chips with lots of horsepower, and computing demand is increasing fast. But emphasizing only the energy consumption overlooks an important other half of the equation. The same technology that’s consuming energy is also helping other industries consume less of it.

From finding methane leaks that we can’t see otherwise, to optimizing wind farms and grids and designing more efficient new infrastructure, AI is finding some surprising ways to contribute to climate action.

This report assembles some of the data and research that’s emerged in recent years. We’re not trying to traffic in hype or hysteria here, just simple math. And what the numbers seem to say is that AI might be a little bit of both: contributor to and solution for some of the same problems.

The Climate Upside of AI: Why the Energy Debate Is More Complicated Than It Looks

The longer you read or write about artificial intelligence and climate change, the more you’ll notice a particular exchange playing out over and over. Someone floats an idea about AI cutting carbon emissions or streamlining energy use. Right away, someone else pops up with: “Yeah, but what about all the electricity data centers consume?”

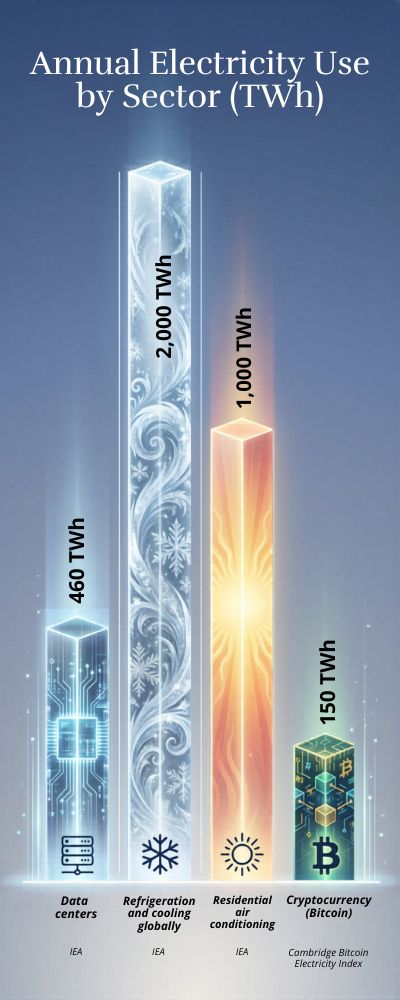

Truth is, you can’t have one conversation without the other. Data centers are power-hungry. There’s no getting around that. But that doesn’t mean we should reduce this conversation to a simplistic battle between good and evil. AI is neither. For scale: according to the International Energy Agency, the world’s data centers consumed around 460 terawatt-hours (TWh) of electricity in 2022, or roughly 2% of global electricity demand.

The same IEA data-center outlook suggests electricity demand from data centers could climb to somewhere between 620 and 1,050 TWh by 2026. Even at the upper bound of that forecast, data centers would consume roughly 3–4% of global electricity, given that global electricity consumption is about 29,000 TWh per year, according to energy data compiled by the World Bank. That’s a jump, to be sure. But it’s not the digital equivalent of suddenly building 1,000 coal-fired power plants.
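If you want to check that share yourself, here is a minimal sketch of the arithmetic. It uses only the figures quoted above (620 to 1,050 TWh against roughly 29,000 TWh of global consumption). One assumption to flag: the denominator is held at today's level, so if global demand grows by 2026, the real shares would come out a little lower.

```python
def share_of_global(demand_twh: float, global_twh: float = 29_000.0) -> float:
    """Return a demand figure as a percentage of global electricity use.

    The 29,000 TWh default is the figure quoted in the text; holding it
    constant through 2026 is a simplifying assumption.
    """
    return 100.0 * demand_twh / global_twh


low_2026 = share_of_global(620)     # lower bound of the 2026 range
high_2026 = share_of_global(1_050)  # upper bound of the 2026 range

print(f"{low_2026:.1f}% to {high_2026:.1f}%")  # prints "2.1% to 3.6%"
```

So the upper bound lands at the low end of that "3–4%" band, and the lower bound barely moves the needle from today.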

A Quick Reality Check

One way to keep all this in perspective is just to compare things. Otherwise, those terawatt-hours can start sounding like science fiction. For example, by the figures below, residential air conditioning alone uses at least twice as much electricity as the entire global data center industry. Refrigeration uses more than that.

| Sector | Annual Electricity Use (TWh) | Source |
|---|---|---|
| Data centers | ~460 | IEA |
| Refrigeration worldwide | ~2,000 | IEA |
| Residential air conditioning | 1,000+ | IEA |
| Bitcoin mining | ~120–150 | Cambridge Bitcoin Electricity Index |

No one writes worried op-eds about the climate implications of AC units every July. We just turn down the thermostat when we get hot. We humans are a little strange that way. We get worked up about the shiny new tech, while ignoring the mundane technologies quietly chewing through energy in the background.

The Efficiency Story You Never Hear

But there’s another part of this story worth paying more attention to: computing is getting way more efficient. A recent paper in Nature Energy estimates that between 2010 and 2018, global data center workloads expanded almost 6-fold, but electricity consumption only rose by around 6%.

That’s a mind-bending statistic when you pause to think about it. Six times more computing power… on nearly the same amount of electricity. That didn’t happen by accident. Over the last decade, engineers worked hard to redesign chips, improve cooling systems, and otherwise wring more performance per watt. To simplify, computers have quietly become way better at doing more with less energy.
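To see what that statistic implies per unit of work, here is a quick back-of-the-envelope sketch. It takes the two numbers quoted above at face value (workloads up roughly 6-fold, electricity up about 6%) and treats "workload" as a single uniform quantity, which is a simplification.

```python
workload_growth = 6.0  # "almost 6-fold" growth in workloads, 2010 to 2018
energy_growth = 1.06   # electricity use up "around 6%" over the same period

# Energy needed per unit of workload, relative to the 2010 level.
energy_per_workload = energy_growth / workload_growth
reduction_pct = 100.0 * (1.0 - energy_per_workload)

print(f"Energy per unit of work: {energy_per_workload:.2f}x the 2010 level")
print(f"Implied reduction: {reduction_pct:.0f}%")  # about 82%
```

In other words, under these figures each unit of computing in 2018 took less than a fifth of the electricity it did in 2010.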

The Question We’re Not Asking

The AI vs climate debate tends to center on a single number: how much electricity AI is using. But that may be looking at this the wrong way. Perhaps the more useful question, maybe the one we should be asking, is this: what does AI enable the rest of the economy to do with energy?

If AI makes it easier for the power grid to absorb renewables, that’s important. If AI can spot methane leaks in oil and gas systems that people can’t, that’s important too. And if AI can shave even a few percent off global fuel use in logistics, the emissions savings could be huge. None of that eliminates the energy footprint of AI. But it makes the story messier. And to be honest, when it comes to climate and tech, the messier stories are usually the most honest ones.

The Electricity Question: How Much Power Does AI Really Use?

You pose a question to an artificial-intelligence model. The response arrives in a flash. It’s simple, even mystical. Occasionally, a nagging question emerges: What’s happening behind the scenes? Somewhere, perhaps thousands of miles away, an array of servers hums in perpetual motion. These computers don’t run on imagination and inquisitiveness. They run on electricity. And a lot of it.

The International Energy Agency’s analysis on AI and energy demand estimates that data centers worldwide consumed around 415 terawatt-hours (TWh) of electricity in 2024, or approximately 1.5% of all the electricity used on Earth. That is in the same range as the annual electricity use of a country like France. Suddenly, the “invisible internet” isn’t so invisible.

The Power Behind the Screens

| Metric | Estimated Value | Source |
|---|---|---|
| Global data-center electricity use (2024) | 415 TWh | IEA |
| Share of global electricity | ~1.5% | IEA |
| U.S. data-center electricity use | 183 TWh | Pew Research |
| Share of U.S. electricity | ~4% | Pew Research |

Now here’s the twist: All that electricity isn’t going toward AI. Data centers also power your cloud storage, Netflix viewing, and constant emails.

However, AI is quickly becoming the most energy-intensive computing application. The detailed breakdown appears in a Pew Research analysis of data-center electricity demand.

And here’s the wild part: According to the IEA report on AI-driven electricity demand, data-center electricity consumption could balloon to around 945 TWh by 2030 if current trends continue. That’s more than double in only a few years.

Training AI Is Where the Energy Goes

The obvious assumption is that AI consumes electricity when users ask a chatbot a question. But a big, concentrated chunk of the energy goes into training AI models. Training a large AI system can consume as much as 50 gigawatt-hours of electricity, according to estimates compiled in the Global Electricity Initiative report on data-center energy use.

That’s about the amount of electricity that 40,000 homes consume annually, if each home uses roughly 1,250 kWh a year; for homes that use closer to 10,000 kWh a year, it’s nearer 5,000 homes. Either way, a single late-night history question isn’t what gobbles up the energy. The massive training runs that happen before you log in are a big part of it.
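Comparisons to "homes" depend heavily on how much electricity you assume one home uses, so it is worth running the numbers. The sketch below starts from the 50 GWh training figure quoted above. The 10,000 kWh-per-home value is a hypothetical round number chosen for illustration, not a figure from the cited report.

```python
KWH_PER_GWH = 1_000_000


def homes_equivalent(energy_gwh: float, kwh_per_home_per_year: float) -> float:
    """Number of homes whose annual electricity use matches `energy_gwh`."""
    return energy_gwh * KWH_PER_GWH / kwh_per_home_per_year


training_gwh = 50.0  # upper estimate for one large training run, from the text

# Per-home consumption implied by a 40,000-home comparison.
implied_kwh_per_home = training_gwh * KWH_PER_GWH / 40_000

# Hypothetical household using 10,000 kWh per year (illustrative assumption).
homes_at_10k = homes_equivalent(training_gwh, 10_000)

print(implied_kwh_per_home)  # 1250.0
print(homes_at_10k)          # 5000.0
```

The takeaway is less the exact count and more the sensitivity: the same 50 GWh looks eight times bigger or smaller depending on which household you pick as the yardstick.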

The Growth That’s Raising Eyebrows

Electricity planners are taking notice. In the United States, electricity demand is expected to reach record highs in 2026 and 2027, in part because of the rapid growth of AI data centers.

And globally, data-center electricity use has been increasing at an annual rate of about 12%, according to the Carbon Brief analysis of AI energy trends. Engineers call that “rapid growth.” The rest of us might say, “Whoa.”
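For a feel of what these growth rates mean, here is a small compound-growth sketch. It uses the figures quoted in this section (415 TWh in 2024, around 945 TWh by 2030, and roughly 12% annual growth) and assumes smooth year-over-year compounding, which real demand will not follow exactly.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to go from `start` to `end`."""
    return (end / start) ** (1.0 / years) - 1.0


def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` for `years` years."""
    return start * (1.0 + rate) ** years


# Growth rate implied by going from 415 TWh (2024) to 945 TWh (2030).
implied_rate = cagr(415, 945, 6)

# Where 415 TWh ends up in 2030 if growth stays at about 12% a year.
at_12_percent = project(415, 0.12, 6)

print(f"Implied annual growth: {implied_rate:.1%}")        # about 14.7%
print(f"2030 demand at 12%/yr: {at_12_percent:.0f} TWh")   # about 819 TWh
```

So the 2030 projection implies growth somewhat faster than the recent 12% pace, which is consistent with the idea that AI is pushing the curve upward.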

So… Is AI the Problem?

Here’s where things get a little complicated. Despite all the headlines, some estimates suggest machine learning amounts to less than 0.2% of global electricity use today, based on figures discussed in the United Nations Regional Information Centre overview of AI energy use.

In other words, AI isn’t eating all the world’s energy. At least, not yet. But it is growing quickly. And one thing we know about technology is that it doesn’t stay small for long.

Where This Might Be Headed

There are basically three ways this story could play out:

| Scenario | What Happens |
|---|---|
| Efficiency improves | New chips reduce power per AI task |
| Clean energy scales | Data centers run mostly on renewables |
| Demand explodes | AI becomes a major electricity driver |

Tech companies are already aware of the stakes. Many of them are already among the world’s largest buyers of renewable energy, according to the Global Electricity Initiative data-center energy report.

So yes, AI requires electricity. A lot of it. But the real question isn’t so much how much energy it uses. The real question is whether we can be smart enough to power our smart machines.

AI vs. Emissions: When More Computing Leads to Less Carbon

It’s a question I hear a lot these days: does AI’s reliance on data centers mean it’s also bad for the climate? It’s a fair enough question. I wondered the same thing myself the first time I read about giant server farms. It sounds like a digital energy plant. But the answer isn’t so black and white. AI certainly uses electricity. I won’t spin this. But AI also quietly helps other industries save energy. That’s the thing.

A report from the International Energy Agency on AI and energy systems finds that the adoption of AI technologies across the energy sector could reduce global greenhouse-gas emissions by up to 4% by 2030 through improvements in energy efficiency. Four percent doesn’t sound like a lot. But in climate policy, that’s actually a pretty big deal.

Where Emissions Are Actually Reduced

Most of the reductions don’t come from moonshot ideas like carbon capture and clean cement. They come from pretty mundane things like routing shipping vessels, heating buildings and running smart power grids. The kinds of things that nobody posts on Instagram. AI is good at detecting inefficiency. It’s like that friend who spots every speck of dust in the house.

| Sector | Potential Efficiency Gain | Source |
|---|---|---|
| Energy grids | 10–20% efficiency improvements | IEA |
| Transportation logistics | Up to 15% fuel savings | McKinsey |
| Buildings and HVAC systems | 20–30% energy reduction | World Economic Forum |

Scientists quoted in the World Economic Forum’s discussion on the role of AI in the energy transition say that AI can help predict the output of renewable energy. That means grid operators don’t have to leave a fossil-fuel plant spinning to supply power when solar isn’t available. Less uncertainty. Less waste.

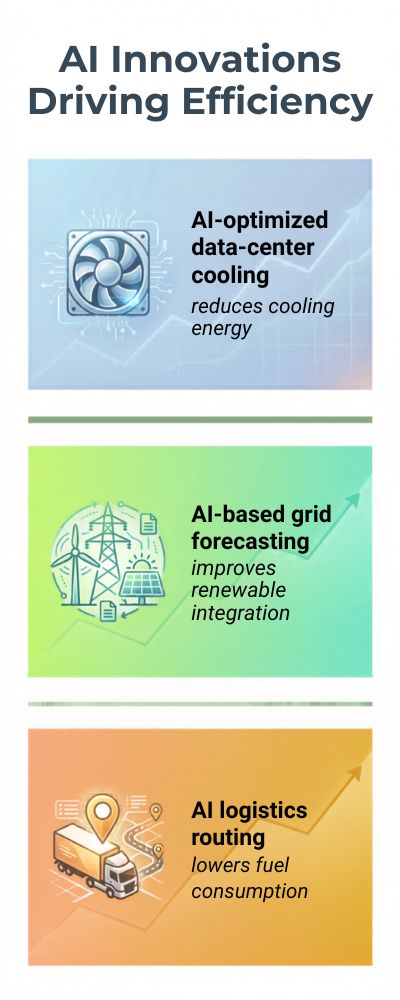

When AI Helps Clean Up Its Own Act

One of the examples I find a bit funny is AI making data centers more energy-efficient. Google used machine learning from DeepMind to cut cooling energy by about 40%, according to a report quoted in Nature’s article on AI-driven energy efficiency. Cooling accounts for a big share of data center energy consumption, so that’s a big deal. It’s like teaching a voracious teenager how to diet.

| Application | Impact |
|---|---|
| AI cooling optimization | ~40% reduction in cooling energy |
| Grid demand forecasting | Better renewable integration |
| Logistics optimization | Reduced fuel consumption |

The Part That Still Isn’t So Tidy

Now, don’t get me wrong. Training AI models can require tens of gigawatt-hours of electricity, according to a report published by Carbon Brief on data center energy and emissions.

That’s a lot of power. And if thousands of companies are training large models, that demand adds up. So the climate equation starts to look like a simple balancing act: the energy AI demands versus the emissions it prevents.

The Topline

The way I see it, AI isn’t intrinsically clean or dirty. It’s just a technology. A messy one. Still evolving. If we use it to make power grids, transportation and industries more efficient, the emissions savings could well outstrip the emissions from the energy it consumes.

But if we build ever-larger AI models just because we can… well, that’s another story. Technology doesn’t fix problems by itself. Humans do. And in the case of AI and climate, that’s a story still playing out.

The Methane Breakthrough: How AI Is Helping Detect the Most Powerful Greenhouse Gas

Methane is odd. Not odd in a funny way, but odd in a weird way. It receives a fraction of the attention of carbon dioxide, but it packs 80 times the heat-trapping punch over 20 years, according to the U.S. Environmental Protection Agency’s explanation of global warming potentials.

So, you know, kind of a big deal. The issue is that methane leaks are invisible. They occur in pipelines, landfills and livestock farms, where invisible puffs of gas escape into the atmosphere.

For years, that meant that much of it was just … missed. Scientists now estimate methane is responsible for around 30% of global warming since the Industrial Revolution, based on findings in the International Energy Agency’s Global Methane Tracker. When I first read that, I stopped for a second. 30% from a gas most of us hardly hear about? Wild.

Satellites, Algorithms, and a Bit of Detective Work

This is where things get interesting. Satellites are constantly scanning the atmosphere, gathering huge amounts of data. The problem is there’s too much of it for people to go through. So AI takes over. Machine-learning models can look at satellite images and identify methane plumes that people might easily miss.

They have already helped find thousands of previously unknown leaks, according to the United Nations Environment Programme’s overview of AI methane detection. It’s like having an incredibly focused intern staring at the Earth all day going, “Wait … that shouldn’t be here.” Once those leaks are identified, companies can fix them, many with very simple repairs.

| Key Methane Facts | Estimate | Source |
|---|---|---|
| Heat-trapping power vs CO₂ (20-year scale) | ~80× stronger | EPA |
| Share of warming since Industrial Revolution | ~30% | IEA |
| Main sources | Fossil fuels, agriculture, waste | UNEP |

The Curious Case of the “Super-Emitters”

Here’s the thing that surprises a lot of people: methane leaks are often concentrated in a small number of places. Research summarized in the Science journal study on methane super-emitters shows that a small percentage of sites produce a disproportionate share of emissions.

In simple terms? Fix a few big ones and you’re really getting somewhere. That’s actually kind of heartening. A lot of climate problems feel insurmountable, but this one is occasionally just about locating a few big leaks and tightening some bolts.
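The "fix a few big ones" logic is easy to illustrate with a toy calculation. The sketch below ranks sites by leak rate and asks what share of the total comes from the top slice. The leak rates are made-up values chosen to show a heavy-tailed pattern, not measurements from the cited study.

```python
def top_share(emissions: list[float], top_fraction: float) -> float:
    """Share of total emissions coming from the largest `top_fraction` of sites."""
    ranked = sorted(emissions, reverse=True)
    n_top = max(1, round(len(ranked) * top_fraction))
    return sum(ranked[:n_top]) / sum(ranked)


# Hypothetical leak rates (kg per hour) for 20 sites; illustrative only.
site_leaks = [900, 450, 60, 40, 30, 25, 20, 15, 12, 10,
              8, 8, 6, 5, 4, 3, 2, 1, 0.5, 0.5]

share = top_share(site_leaks, 0.10)  # top 10% of sites = 2 sites here
print(f"Top 10% of sites emit {share:.0%} of the total")  # 84%
```

With a distribution shaped like this, repairing two sites out of twenty removes most of the problem, which is why finding the largest leaks first matters so much.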

| Detection Method | Strength |
|---|---|
| Manual inspections | Slow, limited coverage |
| Satellite monitoring | Global visibility |
| AI image analysis | Fast identification of leaks |

Why This Might Be One of the Fastest Climate Wins

Methane behaves differently from CO₂. It doesn’t hang around the atmosphere for centuries. If you curb emissions, the climate sees benefits relatively quickly.

According to the UN Environment Programme’s Global Methane Assessment, cutting methane emissions by roughly 45% this decade could prevent about 0.3°C of global warming by 2045. That’s not nothing.

In climate terms, that’s massive. AI isn’t a silver bullet that will fix everything. But when it helps us find leaks we couldn’t find before, it turns an invisible problem into one we can fix. And, honestly, that feels like progress.

From Power Grids to Wind Farms: How AI Is Making Clean Energy More Efficient

At its core, renewable energy is straightforward: construct solar panels and wind turbines, and hook them up to the grid. Easy peasy, lemon squeezy. Except it’s not. As it turns out, the sun doesn’t shine all the time, the wind doesn’t always blow, and people use power at all hours of the day. Managing that unpredictability is actually a bit tricky.

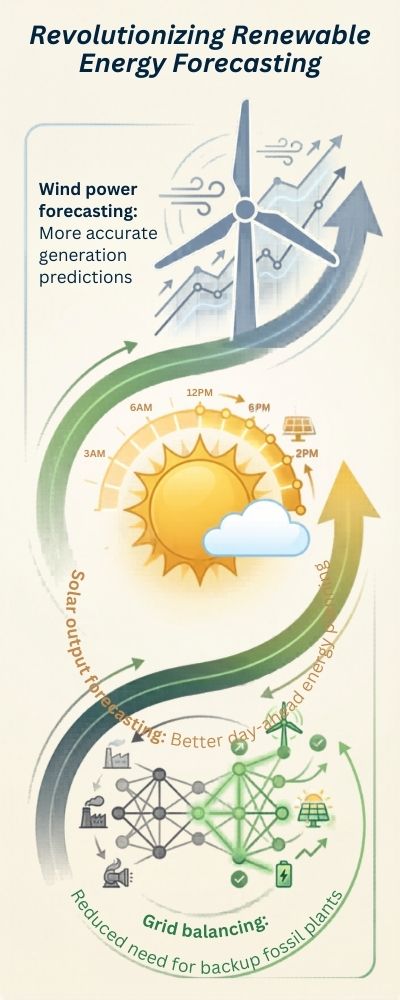

So artificial intelligence (AI) has started to play a supporting role, not as the lead actor, but more as the logistics coordinator behind the scenes. The International Energy Agency found that the use of AI for forecasting and optimisation can help integrate renewables onto the grid more efficiently and reduce energy losses.

The Forecasting Problem

Solar panels and wind turbines rely on the weather. Before, grid operators had to rely on pretty basic modeling and a lot of finger crossing to predict how much energy they would generate. Now AI can take all sorts of data from satellites and weather stations and combine it with data from sensors in the wind turbines and solar panels themselves, to make more accurate predictions.

The World Economic Forum wrote that “Advanced forecasting techniques can make renewable generation and grid operations more efficient.” And the better you can forecast how much energy is going to be generated, the fewer fossil-fuel plants need to sit around as emergency backups.

| Area of Use | Improvement from AI |
|---|---|
| Wind power forecasting | More accurate generation predictions |
| Solar output forecasting | Better day-ahead energy planning |
| Grid balancing | Reduced need for backup fossil plants |

Smarter Wind Farms

Wind turbines look lovely and graceful spinning in the breeze, but there is a fair bit of complex mathematics going on behind the scenes. Wind turbines have to constantly adjust the angle of their blades depending on the wind speed and direction. AI can look at sensor data and optimise the angle in real time.

And according to Nature, this can “boost the output of wind farms by 5-10%”. That may not sound like a lot, but across hundreds of turbines the extra electricity adds up quickly. Essentially AI helps wind turbines get a bit more bang for each gust of wind.

| Optimization Technique | Result |
|---|---|
| AI turbine control | Higher energy yield |
| Predictive maintenance | Reduced downtime |
| Weather-driven planning | Improved grid stability |

Power Grids Are Getting Smarter Too

Power grids are one of the most complex systems we’ve ever built. Electricity demand varies minute to minute, and renewable energy supply does the same. AI can help utilities predict when demand is going to be high, find the most efficient way to route power, and prevent overloads. According to McKinsey, “Digital optimisation techniques can reduce energy losses and operating costs.”

A Small Boost That Adds Up

And it’s not like any of these improvements are massive on their own. We’re talking 5%. 10%. But energy systems are huge. So when you make small improvements to the efficiency of thousands of turbines, farms and grid systems, it starts to add up. Clean energy is already gaining traction. AI is just one tool that helps it run a little bit better, and sometimes that’s all you need.

The Efficiency Revolution: AI as a Tool for Using Less Energy, Not More

Artificial intelligence and energy. In the same breath. Someone will surely jump in with, “But AI will increase energy consumption, won’t it?” Well, yes, training massive models and running data centers requires energy, of course. Yet here’s the less-reported flip side: that same AI can make entire systems more energy-efficient. Which means less overall energy waste.

The International Energy Agency’s report on energy and AI points out that digitalization, including AI, can improve energy efficiency in multiple sectors, from manufacturing to transportation to power generation. Yes, AI will consume energy. But it can also help the rest of the economy consume less.

The Quiet War on Energy Waste

Energy waste is everywhere. In every-day systems. When buildings heat or cool empty spaces. When delivery trucks take indirect routes.

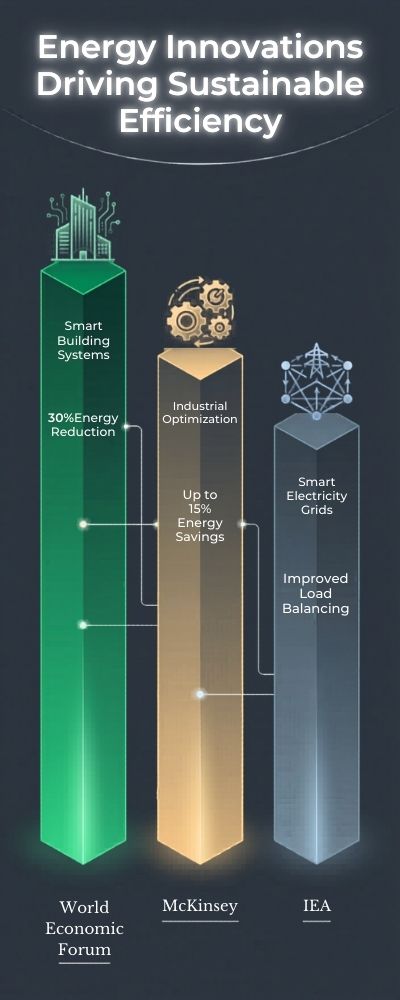

| Sector | Potential Efficiency Gain | Source |

|---|---|---|

| Smart building systems | 20–30% energy reduction | World Economic Forum |

| Industrial optimization | Up to 10–15% energy savings | McKinsey |

| Smart electricity grids | Improved load balancing | IEA |

When power grids generate more electricity than they need, just to be safe. AI is extremely good at identifying those inefficiencies. For instance, a study cited in the World Economic Forum’s report on AI and the energy transition found that AI-managed building systems can optimize heating, cooling, and lighting based on occupancy and weather patterns. In other words, fewer lights are left on in empty meeting rooms.

Smarter Buildings, Smaller Bills

The built environment is one of the biggest energy hogs of all. The International Energy Agency’s report on building energy use estimates that buildings consume some 30% of all energy worldwide. That’s a lot of room for optimization.

AI can mine sensor data (temperature, occupancy, humidity levels) and continually tweak energy use. The outcome often sounds almost laughably straightforward: the building consumes energy only when it needs to.
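To make "energy only when it needs to" concrete, here is a deliberately simple sketch of occupancy-aware temperature control. It is a hand-written rule, not a learned model, and it stands in for the much richer controllers real systems use. The names `ZoneReading` and `hvac_action`, the comfort band, and the setback value are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class ZoneReading:
    """One sensor snapshot for a room or zone (hypothetical structure)."""
    occupied: bool
    indoor_temp_c: float


def hvac_action(reading: ZoneReading,
                comfort_low: float = 21.0,
                comfort_high: float = 24.0,
                setback: float = 4.0) -> str:
    """Return "heat", "cool", or "idle" for the current reading.

    When the zone is empty, the acceptable band widens by `setback`
    degrees on each side, so the system runs less often.
    """
    low, high = comfort_low, comfort_high
    if not reading.occupied:
        low -= setback
        high += setback
    if reading.indoor_temp_c < low:
        return "heat"
    if reading.indoor_temp_c > high:
        return "cool"
    return "idle"


print(hvac_action(ZoneReading(occupied=True, indoor_temp_c=26.0)))   # cool
print(hvac_action(ZoneReading(occupied=False, indoor_temp_c=26.0)))  # idle
```

The same 26°C room gets cooled when people are in it and left alone when it is empty. An AI-managed system would learn the band and the schedule from data rather than hard-coding them, but the savings come from the same basic idea.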

| AI Application | Benefit |
|---|---|
| Smart HVAC control | Reduced heating/cooling waste |
| Occupancy-based lighting | Lower electricity use |
| Predictive maintenance | Fewer system failures |

It isn’t sexy technology. But it works.

Industry Gets an Efficiency Upgrade

Manufacturing and industrial processes are getting similar upgrades via AI. Machine learning can monitor equipment performance and flag areas of inefficiency, allowing for course corrections before issues become major problems.

According to a report by McKinsey on AI in the energy sector, digital tools can lower the operational energy use of manufacturing facilities. Sometimes those gains come from something simple like optimizing machine run times, or identifying the early warning signs of equipment failure.

Small Gains, Big Impact

The thing about energy efficiency? Progress rarely comes in heroic leaps. Instead, it’s a series of incremental gains. Here and there. A 5% gain in efficiency over here. A 10% gain over there. In cities, factories, transportation systems, and grids, those marginal gains start to add up to something significant.

AI isn’t going to single-handedly fix the energy problem, but it is turning out to be great at eliminating waste. And let’s be honest: in a world that gobbles energy the way ours does, eliminating waste may be the smartest thing we can do.

The Hidden Climate Cost of Computing—and How Fast It’s Falling

You don’t think about it, but every time you watch a video or send a file or ask an AI a question, power is being consumed. Lots of power. Somewhere, a server is blinking, a cooling system is whirring, and electricity is being pumped through it like water through a municipal water supply.

As the International Energy Agency noted in its recent report on energy and AI, data centers worldwide used about 415 terawatt-hours of electricity in 2024, about 1.5 percent of total electricity use. That is nothing to sneeze at.

It’s roughly equivalent to the annual power consumption of some medium-sized countries. And yes, that has an impact on the climate. But here is the thing: That impact is changing. Fast.

An Inconvenient Surprise

This shouldn’t be news to you, but computing is using more energy than ever. What might be news, though, is how fast that demand for energy is (and isn’t) growing. It’s been rising for 20 years, but not nearly as quickly as the computing demand itself.

As one group of researchers put it in an analysis of computing efficiency published in Nature, “we observe a 1 million-fold reduction in computational energy intensity since the invention of the electronic computer.” In other words, computers are doing a lot more with a lot less.

To put it another way, the mileage of computers has improved a lot over the years. That isn’t necessarily surprising. But what might surprise you is how much faster the improvement has been than it has been for, say, cars.

Efficiency Improvements in Transportation and Computing

| Metric | Early 2000s | Today |
|---|---|---|
| Server energy efficiency | Lower | Dramatically improved |
| Data-center cooling systems | Less optimized | AI-assisted efficiency |
| Energy per computation | High | Rapidly declining |

You Have the Right to Remain Cool

Cooling is a big part of it. Cooling systems in data centers used to be among the biggest power hogs in the entire operation. That is starting to change. For example, a few years ago Google reportedly used machine learning from DeepMind to cut cooling energy use in its data centers by about 40 percent, as Nature reported. Cooling isn’t the only thing getting more efficient, though.

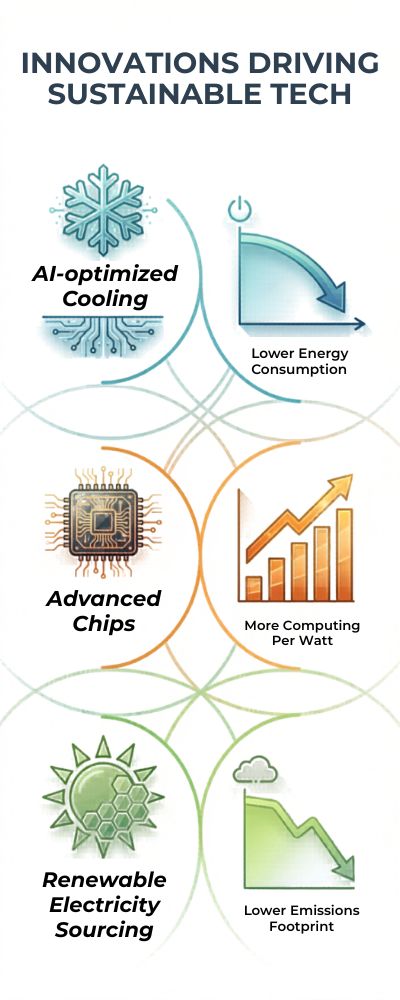

| Innovation | Impact |
|---|---|
| AI-optimized cooling | Lower energy consumption |
| Advanced chips | More computing per watt |
| Renewable electricity sourcing | Lower emissions footprint |

The Big Picture

None of this means demand for computing power is going away. AI models are getting bigger. Cloud computing is on the rise. Streaming is taking over the world. This isn’t going to slow down. But the power used per task is falling. Improvements in chips, cooling, and data center design are making computers more efficient, as the researchers concluded in the Nature analysis.

Further, as the IEA noted in its analysis of data centers and data transmission networks, “data centre operators have become some of the largest corporate buyers of renewable electricity.” Yes, there is still a climate cost from computing. Don’t pretend otherwise.

The good news is that the technology itself is helping to reduce that cost. If this trend keeps going, digital could be a lot cleaner than it looks right now.

Training vs. Using AI: Why Inference May Matter More Than Training

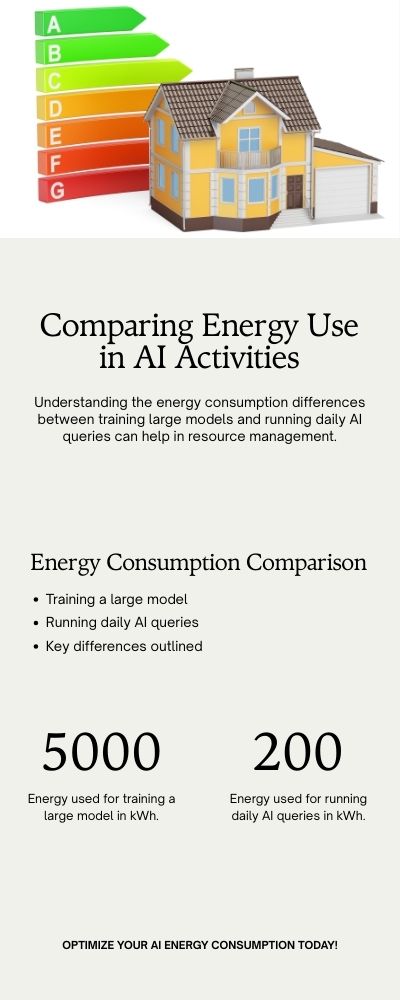

When it comes to the carbon footprint of AI models, the focus is usually on the training process. Huge clusters of GPUs running for weeks, enormous electricity bills, and a team of caffeinated engineers. The works. Sure, training deep models is computationally intensive.

According to the analysis on AI energy use published in Carbon Brief, training a single model can use tens of gigawatt-hours of electricity. Not a negligible number. But, the thing is, training is a one-off process. Inference is a continuous one.

Inference (The Silent Power Hog)

After training is complete, a model is deployed, and people put it to work: answering questions, generating images, summarizing documents. Millions, often billions, of times a day. This is called inference: the stage where a trained model generates predictions.

According to research referenced in the International Energy Agency’s report on energy and AI, the total energy used for inference by millions of users eventually adds up and surpasses the initial training energy.

| AI Stage | What Happens | Frequency |
|---|---|---|
| Training | Model learns from large datasets | Rare |
| Inference | Model generates responses to users | Constant |

Training uses a lot of energy in one burst. Inference uses a little energy per request, but all the time.

Small Individual Contributions, Huge Collective Impact

The individual energy footprint of a single AI prediction is minimal. But there are many of them. If a million users are making a few requests per day, that’s a lot of predictions. According to an article in Nature on computing efficiency trends, as computing demands from AI continue to grow, more attention is being placed on optimizing inference systems.

| Activity | Energy Pattern |
|---|---|
| Training a large model | High energy, short period |
| Running daily AI queries | Lower energy, continuous |

Think of it as boiling a huge pot of water once versus keeping the kettle warm all day.
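A rough break-even calculation shows how quickly the kettle can catch up with the pot. The sketch below asks how many days of inference it takes to match one training run. The 50 GWh training figure is the upper estimate quoted earlier in this report; the 1 Wh per query and 100 million queries per day are hypothetical round numbers picked for illustration, not measured values.

```python
def breakeven_days(training_gwh: float,
                   wh_per_query: float,
                   queries_per_day: float) -> float:
    """Days of inference needed to equal the energy of one training run."""
    training_wh = training_gwh * 1e9  # 1 GWh = 1e9 Wh
    daily_inference_wh = wh_per_query * queries_per_day
    return training_wh / daily_inference_wh


days = breakeven_days(
    training_gwh=50,         # upper estimate for a large training run
    wh_per_query=1.0,        # hypothetical energy per query
    queries_per_day=100e6,   # hypothetical daily query volume
)
print(f"Inference matches training energy after about {days:.0f} days")  # 500
```

Under these assumptions, inference overtakes training in well under two years, and faster still if usage grows. Change either hypothetical input and the answer moves in direct proportion, which is exactly why per-query efficiency gets so much attention.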

The Importance of Efficiency

Due to the continuous nature of inference, any improvements to efficiency here can have outsized effects. Already, there are semiconductor companies designing specialized chips to run AI predictions using far less energy per prediction.

As noted in a report by McKinsey on AI and energy systems, optimizing workloads and hardware to minimize operational energy usage is now a focus of many companies.

The Opportunity

Training is still important. It’s how the model learns. But I think when we only consider training energy, we’re only looking at half of the picture. Inference is where AI is used in practice. It’s the part that people interact with daily.

And realistically, I think that’s where the biggest opportunity for improvement probably lies.

The Future of Data Centers: Can AI Help Build Its Own Low-Carbon Infrastructure?

Artificial intelligence is like a black hole: every search, chatbot interaction or video streamed ultimately gets sucked into a giant server facility, known as a data center. And the more intelligent computers become, the more energy they consume.

Globally, data centers already account for 1.5% of electricity demand, consuming some 415 terawatt-hours of electricity per year, the International Energy Agency said in its report, Energy and AI. That leaves a tantalizing question: can AI help design data centers that run cleaner?

The notion has a whiff of science fiction. Yet some of it is already a reality.

Intelligent Design

A data center’s design is only partly about the servers that power it. Cooling, air flow, the placement of computer chips and the routing of electricity can all influence energy losses.

Engineers are now using machine learning models to simulate thousands of designs before a spade even breaks the ground. Digital optimization tools can significantly improve the efficiency of energy infrastructure by modeling complex systems in advance, according to a report by consulting firm McKinsey, How AI can help the energy sector.

| Design Factor | Traditional Approach | AI-Optimized Approach |
|---|---|---|
| Cooling systems | Static engineering models | Adaptive simulations |
| Facility layout | Fixed configurations | Data-driven design |
| Energy distribution | Manual modeling | Predictive optimization |

Rather than guessing the optimal set-up, engineers can model thousands of candidates.

Cooling: the Silent Energy Hog

One of the biggest sources of energy losses in data centers is cooling. Servers produce a lot of heat, and keeping them at a stable temperature requires a lot of electricity.

Perhaps the most famous example is the work of Google, where AI from UK-based DeepMind reduced the amount of energy used for cooling by around 40%, according to a report in Nature, AI brings data centre cooling gains.

| Cooling Innovation | Result |
|---|---|
| AI temperature control | Reduced cooling energy |
| Real-time sensor analysis | Improved efficiency |
| Predictive maintenance | Lower downtime |

That reduces not only energy demand but also the carbon footprint of computing.

Renewable Power for Data Centers

Energy efficiency is only half the battle. Where the electricity comes from is just as important as the amount consumed.

Tech companies have become some of the world’s biggest buyers of renewable energy, the IEA said in a report, Data centres and data transmission networks. Wind farms, solar plants and long-term renewable energy contracts are increasingly linked to the development of new data centers.

| Strategy | Climate Benefit |
|---|---|
| Renewable power contracts | Lower operational emissions |
| Smart grid integration | Better renewable balancing |
| AI-based energy forecasting | Reduced energy waste |

In other words, the algorithms that enable digital services can also be used to manage renewable power.

A Technology Reinventing Itself

There’s something quietly profound going on here. AI requires huge server farms, which AI is also helping us to reinvent.

Smarter cooling. Better layouts. Cleaner power. Each of these is fairly unremarkable on its own. Together, though, they suggest a future where data centers are not only getting bigger, but vastly more efficient.

And if the technology keeps improving at its current rate, then the computers that power the digital economy may also design some of the cleanest infrastructure the world has ever known.

The Climate Math of AI: What the Numbers Suggest for the Next Decade

Artificial intelligence (AI) has a weird image: it’s either saving the world or quietly taking down the power grid. The reality, as is often the case, is somewhere in between. Data centers around the world, including those that run AI workloads, currently consume about 415 terawatt-hours (TWh) of electricity a year, or around 1.5% of global electricity demand, according to the International Energy Agency’s report on energy demand from AI and data centers.

| Metric | Today | Possible 2030 |
|---|---|---|
| Data-center electricity use | ~415 TWh | ~945 TWh |
| Share of global electricity | ~1.5% | Possibly ~3% |

That’s a pretty big number, but it’s worth keeping in perspective. Aviation, for instance, accounts for around 2-3% of global CO2 emissions, according to data summarized by the IPCC climate assessment. That compares a share of emissions with a share of electricity use, so it’s a rough yardstick rather than a like-for-like comparison, but it suggests AI isn’t currently dominating the emissions picture. Its future is what has everyone paying attention. AI workloads are growing fast, with new models, new applications, and millions of daily users driving up demand.

Global electricity demand from data centers could reach around 945 TWh by 2030 if demand continues to increase, more than doubling today’s use, according to the IEA’s analysis of future AI-driven electricity demand. That’s the kind of growth curve that makes energy planners sit up a little straighter.
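
For a sense of how steep that curve is, the two figures can be converted into an implied average annual growth rate. This is a back-of-the-envelope sketch, not part of the IEA's analysis. It assumes "today" means 2024 (the text above does not name a base year) and that growth compounds evenly; `implied_annual_growth` is an illustrative helper name.

```python
def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate that takes start_twh to end_twh in `years` years."""
    if start_twh <= 0 or end_twh <= 0 or years <= 0:
        raise ValueError("inputs must be positive")
    return (end_twh / start_twh) ** (1 / years) - 1


TODAY_TWH = 415.0   # current data-center electricity use cited above
TWH_2030 = 945.0    # projected 2030 use cited above
BASE_YEAR = 2024    # assumption: the year "today" refers to

growth = implied_annual_growth(TODAY_TWH, TWH_2030, 2030 - BASE_YEAR)
print(f"Implied average growth: {growth:.1%} per year")
print(f"Overall multiple: {TWH_2030 / TODAY_TWH:.2f}x")
```

Under that six-year assumption, the implied rate works out to a little under 15% a year. A different base year would shift the figure, which is why it is exposed as a named constant.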

Meanwhile, computing efficiency has been improving rapidly, perhaps more so than most people realize. Research summarized in Nature’s analysis of computing efficiency trends has found that better chips, software, and cooling have slashed the amount of energy required per computation. In other words, even as computing demand soars, the energy required per task continues to plummet.

| Efficiency Driver | Impact |
|---|---|
| New AI chips | Higher performance per watt |
| Data-center cooling optimization | Lower energy waste |
| Workload scheduling | Improved server utilization |

AI May Also Cut Emissions

This is the piece of the puzzle that tends to get missed: AI can also help cut emissions in other sectors. AI applications like smart grids, logistics optimization, and building energy management can significantly improve efficiency.

Some estimates discussed in the World Economic Forum’s analysis of AI and climate solutions have suggested that digital optimization could cut emissions across industries by improving energy efficiency. So the climate equation isn’t just about the emissions AI generates; it’s also about the emissions AI prevents.

| Factor | Trend |
|---|---|
| AI demand | Rapidly increasing |
| Computing efficiency | Improving quickly |
| Energy sources | Gradually shifting to renewables |

All That Math in One Equation

So the climate math of AI basically boils down to three moving parts: demand for computing, the efficiency of that computing, and the cleanliness of the energy behind it. If efficiency and clean energy keep improving faster than demand for computing power grows, AI’s climate impact could end up being relatively modest, or even positive in some areas. If demand outpaces improvements in efficiency, AI’s power consumption could end up getting much, much bigger.

The numbers don’t yet clearly point to one outcome or the other. Instead, they suggest a race between technological progress and energy demand, and the winner of that race will probably be determined over the course of the next decade.
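
That race can be written as a toy model: energy use scales with computing demand and falls with efficiency gains, while emissions also fall as the electricity mix gets cleaner. The sketch below is an illustration of the logic, not a forecast. Every rate in it is hypothetical, the `Scenario` class and `project` function are names invented for this example, and only the 415 TWh starting point comes from the figures above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """Yearly rates of change; every value used below is hypothetical."""
    name: str
    demand_growth: float      # growth in computing demand per year
    efficiency_gain: float    # drop in energy needed per unit of computing per year
    intensity_decline: float  # drop in CO2 emitted per unit of electricity per year


def project(s: Scenario, years: int, energy_now_twh: float = 415.0):
    """Return (energy in TWh, emissions relative to today) after `years` years."""
    # Energy scales with demand and shrinks with efficiency gains.
    energy_factor = ((1 + s.demand_growth) * (1 - s.efficiency_gain)) ** years
    # Emissions additionally shrink as the electricity mix gets cleaner.
    emissions_factor = energy_factor * (1 - s.intensity_decline) ** years
    return energy_now_twh * energy_factor, emissions_factor


SCENARIOS = [
    Scenario("efficiency keeps pace", 0.25, 0.22, 0.03),
    Scenario("demand outpaces efficiency", 0.30, 0.10, 0.03),
]

for s in SCENARIOS:
    energy, emissions = project(s, years=10)
    print(f"{s.name}: {energy:.0f} TWh, emissions x{emissions:.2f} vs today")
```

In the first scenario, the yearly efficiency gain slightly outweighs demand growth, so energy use drifts down; in the second, demand wins and both energy and emissions climb. The point is the structure of the calculation, since small changes in the yearly rates compound into very different outcomes over a decade.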

Taking all these applications together (energy networks, methane detection, design optimization, efficiency gains), a trend begins to emerge. AI’s climate impact isn’t something you can capture with a single metric. Instead, it has two sides.

On the one hand, there’s the ever-increasing computing load. More models, more data centers, more power use. That trajectory isn’t going to change any time soon.

On the other hand, there’s a more subtle trend unfolding in parallel: more efficient grids, smart methane detection systems, automated buildings, more efficient wind turbines, and so on. None of these developments is particularly spectacular. But tackling climate change has never been about a single magic bullet. Instead, it’s about a million tiny tweaks adding up.

So the question isn’t whether AI will use more power; it already does. Instead, it’s whether the efficiency gains AI enables in the rest of the economy can keep pace with the power it consumes itself.

The answers so far are inconclusive, but they do point to one thing: if wielded properly, the same technologies that are revolutionizing computing might also revolutionize how we use power in the first place.

Sources:

International Energy Agency – Energy and AI / Data-center electricity demand: https://www.iea.org/reports/energy-and-ai

International Energy Agency – AI driving electricity demand from data centres: https://www.iea.org/news/ai-is-set-to-drive-surging-electricity-demand-from-data-centres-while-offering-the-potential-to-transform-how-the-energy-sector-works

International Energy Agency – Data centres and data transmission networks: https://www.iea.org/reports/data-centres-and-data-transmission-networks

International Energy Agency – Global Methane Tracker 2024: https://www.iea.org/reports/global-methane-tracker-2024

Intergovernmental Panel on Climate Change (IPCC) – Sixth Assessment Report (AR6): https://www.ipcc.ch/report/ar6/wg3/

U.S. Environmental Protection Agency – Understanding Global Warming Potentials: https://www.epa.gov/ghgemissions/understanding-global-warming-potentials

United Nations Regional Information Centre – How much energy does AI use?: https://unric.org/en/artificial-intelligence-how-much-energy-does-ai-use/

Carbon Brief – AI and data centre energy use in charts: https://www.carbonbrief.org/ai-five-charts-that-put-data-centre-energy-use-and-emissions-into-context/

Science – Methane super-emitters research: https://www.science.org/doi/10.1126/science.abj4351

Reuters – U.S. electricity demand forecasts and AI growth: https://www.reuters.com/business/energy/us-power-use-beat-record-highs-2026-2027-ai-use-surges-eia-says-2026-03-10/

Global Electricity Initiative – Data center energy consumption overview: https://www.globalelectricity.org/data-centers-energy-consumption/